Historical:SoC 2008 Project OpenGL Preview

The OpenGL Preview project aims to create a fast previewer for Hugin that redraws the preview at real-time rates. After that, the new previewer can be made more interactive than the current one. The project is James Legg's Google Summer of Code 2008 project, mentored by Pablo d'Angelo.

Image Transformations

The new previewer will have to approximate the remapping of the images to fit on low resolution meshes. There are two methods for this:

Remapping Vertices

Taking a point in the input image, we can find where the point is transformed to on the final panorama. Therefore, we can take a uniform grid over an input image, and create a mesh that remaps this grid into the output projection.

Disadvantages:

- This method suffers when the input image crosses over the +/-180° boundary in projections such as Equirectangular Projection, as there will be faces connecting one end to the other, and nothing between the edges of those faces and the actual boundary. We must split the mesh so that each part is continuous, which means some faces will have to be defined off the edge of the panorama.

- The poles of an equirectangular image will also cause similar problems. At a pole, the image should cover the whole width of the panorama, but this is only one point in the input image. Vertices in the mesh near the pole define a face that does not cover the whole row.

- The detail in the faces might not match up well with the area of the panorama. The faces are all of different sizes and some mappings may put the details in parts that can't be seen as easily as the lower resolution parts.

Advantages:

- The mesh resembles the output projection well, and covers roughly the same area as the correct projection. The edges of the mesh lie along the edges of the image, so we don't need to worry about what happens outside of it.
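
The vertex method and its seam problem can be illustrated with a minimal sketch. It assumes a hypothetical input image: an ideal rectilinear (pinhole) photo with no lens distortion, rotated only by yaw, remapped into an equirectangular panorama. The function names and parameters are made up for illustration and are not Hugin's API; the real previewer would use the panorama's full transformation instead.

```python
import math

def rectilinear_to_equirect(u, v, hfov_deg=90.0, yaw_deg=0.0, aspect=1.5):
    """Map normalised input coordinates (u, v in [0, 1]) to (lon, lat) in degrees.

    Hypothetical stand-in for the real image-to-panorama transform.
    """
    half_w = math.tan(math.radians(hfov_deg) / 2.0)
    # Ray through the pixel, camera looking along +z.
    x = (2.0 * u - 1.0) * half_w
    y = (1.0 - 2.0 * v) * half_w / aspect
    z = 1.0
    # Rotate the ray about the vertical axis by the yaw angle.
    yaw = math.radians(yaw_deg)
    xr = x * math.cos(yaw) + z * math.sin(yaw)
    zr = -x * math.sin(yaw) + z * math.cos(yaw)
    lon = math.degrees(math.atan2(xr, zr))
    lat = math.degrees(math.atan2(y, math.hypot(xr, zr)))
    return lon, lat

def build_vertex_mesh(cols, rows, **transform_args):
    """Transform every vertex of a uniform grid laid over the input image."""
    return [[rectilinear_to_equirect(i / cols, j / rows, **transform_args)
             for i in range(cols + 1)]
            for j in range(rows + 1)]

def faces_crossing_seam(verts):
    """List the (column, row) faces whose corners lie on both sides of +/-180."""
    rows, cols = len(verts) - 1, len(verts[0]) - 1
    crossing = []
    for j in range(rows):
        for i in range(cols):
            lons = [verts[j][i][0], verts[j][i + 1][0],
                    verts[j + 1][i][0], verts[j + 1][i + 1][0]]
            # A jump of more than half the panorama width means the face
            # would be drawn stretched across the whole output.
            if max(lons) - min(lons) > 180.0:
                crossing.append((i, j))
    return crossing

mesh = build_vertex_mesh(8, 4, yaw_deg=170.0)
print(faces_crossing_seam(mesh))
```

With the image yawed to 170°, one column of faces straddles the boundary; those are the faces that would have to be split or duplicated off the edge of the panorama.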

Remapping Texture Coordinates

Alternatively, given a point on the final panorama, we can calculate what part of an input image belongs there. Therefore, we can take a uniform grid over the panorama, and map the input image across it.

Disadvantages:

- This mapping breaks down at the borders of the input image. The borders of the input image may cross the output mesh at any position, so we need to draw the image with a transparent border.

- We must also calculate the extent of the images in the result so the mesh only covers the minimal area.

- Some faces of the minimal bounding rectangle still don't contain any of the input image at all; processing them would be a waste of time.

Advantages:

- There are no problems at the poles and edges of the panorama.

- The output is of uniform quality throughout, as the faces always have the same density.
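
The texture coordinate method can be sketched the same way, again assuming a hypothetical ideal rectilinear input image with only a yaw rotation and an equirectangular output. The names below are illustrative, not Hugin's API. The sketch lays a uniform grid over the whole panorama, computes a texture coordinate for each vertex, and keeps only the faces with at least one corner inside the input image.

```python
import math

def equirect_to_rectilinear(lon_deg, lat_deg, hfov_deg=90.0, yaw_deg=0.0, aspect=1.5):
    """Return texture coordinates (u, v) for a panorama direction.

    Returns None when the direction is behind the (hypothetical) camera.
    """
    lon = math.radians(lon_deg - yaw_deg)
    lat = math.radians(lat_deg)
    # Unit direction vector in the camera's frame, camera looking along +z.
    x = math.cos(lat) * math.sin(lon)
    y = math.sin(lat)
    z = math.cos(lat) * math.cos(lon)
    if z <= 1e-9:
        return None
    half_w = math.tan(math.radians(hfov_deg) / 2.0)
    u = 0.5 * (x / z / half_w + 1.0)
    v = 0.5 * (1.0 - (y / z) * aspect / half_w)
    return u, v

def build_texcoord_mesh(cols, rows, **transform_args):
    """Texture coordinates at every vertex of a uniform grid over the panorama."""
    grid = []
    for j in range(rows + 1):
        lat = 90.0 - 180.0 * j / rows
        grid.append([equirect_to_rectilinear(-180.0 + 360.0 * i / cols, lat,
                                             **transform_args)
                     for i in range(cols + 1)])
    return grid

def inside_image(tc):
    return tc is not None and 0.0 <= tc[0] <= 1.0 and 0.0 <= tc[1] <= 1.0

def useful_faces(grid):
    """Faces with at least one corner inside the input image.

    This is only a rough test: an image smaller than one face could be missed.
    """
    rows, cols = len(grid) - 1, len(grid[0]) - 1
    return [(i, j) for j in range(rows) for i in range(cols)
            if any(inside_image(grid[j + dj][i + di])
                   for dj in (0, 1) for di in (0, 1))]

grid = build_texcoord_mesh(16, 16)
faces = useful_faces(grid)
print(len(faces), "of", 16 * 16, "faces touch the input image")
```

Faces that straddle the image border still carry texture coordinates outside the 0 to 1 range, which is why the image needs a transparent border when it is drawn this way.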

Distortions Comparison

To check that these transformation methods do not distort the image too much, I devised a test. Using Nona to produce a coordinate transformation map from input image coordinates to output image coordinates, and similarly creating a projection that goes the other way, I tried these transformations in Blender.

I projected a cylindrical panorama, 360° wide, and with a pixel resolution 4 times as wide as it is tall, into a fisheye image pointing at the lower pole of the input image, 270° wide, and square.

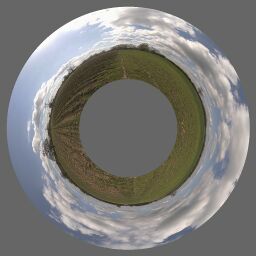

| Vertex mapping Approximate Transformation

This mesh has 32 columns of faces, and 8 rows, across the input image. Since the image is 4 times as wide as it is tall, this means there are 256 faces which cover an equal amount of the input image. |

Transformation with Nona

This is the remapping that the other images are approximating. |

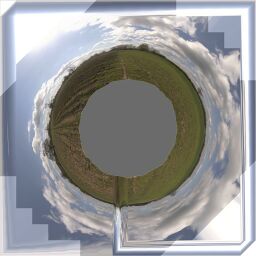

Texture Coordinate mapping Approximate Transformation

This mesh has 16 rows of faces and 16 columns, spread equally over the output panorama, so there are 256 faces which cover an equal amount of the output. Note that at this point I have not attempted to correct the texture coordinates around the boundary of the input image. |

Another test performed was mapping a rectilinear image to the zenith of an equirectangular image.

Modules

There are many good ideas for the future of a graphically accelerated Hugin (see the discussion on the mailing list), so a lot of development can build on OpenGL and the new preview after I have completed this project. It is therefore especially important that I provide clean, highly modular and extensible code for future use. Here is how the modules and their interfaces look:

- Texture Manager

- The texture manager is built on top of Hugin's ImageCache, and will perform a similar function but using graphics memory and textures. It is able to set the active texture to one that represents an image. It adjusts the size of the textures it is storing so they fill a sensible amount of memory and provide an even level of detail for the images. This is partially complete: it works fine with real-time photometric adjustment (which only corrects exposure and white balance), photometric correction is in progress, and a logarithmic display for high dynamic range images may be yet to come.

- Mesh Manager

- The mesh manager sets up lists of instructions for the graphics system to draw a given image, remapped how it should be in the panorama's output. It uses a mesh remapper for every image to find what faces it needs. This has been implemented.

- Mesh Remapper

- This provides the coordinates of points in a mesh. It splits the image into faces that approximate the transformation using the remapping vertices method above. However, the rectangles of the input image that have their corners transformed to make the face vertices are sized adaptively: in the areas where the remapping is almost linear there are fewer faces. Faces also stop being split once they would be too small for the extra detail to be noticeable. This is partially complete.

- Renderer

- This is responsible for drawing the panorama, although it only really compiles a list of render layers and draws them when asked to. Currently it just draws all the active images, as the render layers are not implemented yet.

- Render Layers

- Everything the renderer draws will have been put there by a render layer. As the name suggests, they are ordered and can cover each other up. At the end of the project there will be a layer to draw the selected images. Other layers could provide an indication of image outlines, or perhaps redraw an image in a specific way. Not implemented yet.

- Image Render Hooks

- When drawing, we may want to do something different for a selection of the images. A render hook can be set up that changes how an image is rendered. It would provide callbacks for before and after drawing, a condition to drop an image from rendering altogether, or a replacement function for drawing it. An example would be to provide different blending modes. We could subtract an image from the ones behind it by dropping it from the images render layer, and then drawing it again in a different render layer, but subtracting it from the layers beneath. Alternatively, if we wanted something drawn in place but semitransparent, we could turn on blending before it is drawn and turn it off afterwards. These are not implemented yet.

- Viewer

- The viewer combines a renderer and a basic interface for the tools. It tells the renderer when to render, but just before it does so it lets the mesh and texture managers update. It also stores the view state object. It will be able to convert between a mouse position and a position in the panorama. It is implemented as a wxWidget.

- Input Manager

- An input manager gets all keyboard and mouse events the viewer sees and passes them to the correct tool. Tools register events they want. When tools are disabled they should give up their events, which can then be taken by a newly activated tool. Not implemented yet.

- Tools

- Tools can be enabled or disabled. When switching states they must set up or turn off events in the input manager, render layers, and image render hooks. They get access to the panorama data and viewer data. None are implemented yet. An image drag / recentre tool and a cropping tool are planned. Other tools could be built to work with control points and selection of images, for example.

- GLPreviewPanel

- This is a replacement PreviewPanel. It holds the viewer and gives it some screen space. It shows controls for the viewer and tools. It will keep an input manager to decide what to do with user input events. Some tools may be mutually exclusive, for example there might be a few that want to handle mouse events. The preview panel should turn off one tool before starting another in this case.

- View State

- As the user changes properties of the panorama, we want something to accept the modifications in real time to control what is drawn. This state should differ from the state of the panorama so the user can cancel their changes, and so visible changes can be made while dragging things with the mouse (without creating an undo step for each mouse motion). The ViewState object is what is used to hold the temporary state. It also updates to reflect the other conventional modifications. Finally, it can report what type of changes have been made to the panorama, and therefore deduce which expensive calculations need to be done to get the preview up to date and which previously calculated things are still valid. It is implemented now.
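
The adaptive face sizing described under Mesh Remapper could look something like the following sketch. It is an assumption about one reasonable approach, not the project's actual code. A rectangle of the input image is kept as a single face when the transform of its centre is close to the average of its transformed corners (the remapping is almost linear there), or when the rectangle is already at a minimum size. Otherwise it is split into four. The `transform`, `tol` and `min_size` names are hypothetical.

```python
import math

def subdivide(transform, u0, v0, u1, v1, tol=0.01, min_size=1.0 / 64, faces=None):
    """Recursively split the input rectangle (u0, v0)-(u1, v1) into faces.

    transform maps input coordinates (u, v) to output coordinates (x, y).
    Returns a list of (u0, v0, u1, v1) rectangles, one per face.
    """
    if faces is None:
        faces = []
    corners = [transform(u0, v0), transform(u1, v0),
               transform(u0, v1), transform(u1, v1)]
    # Where a flat face would place the centre of the rectangle.
    flat_x = sum(c[0] for c in corners) / 4.0
    flat_y = sum(c[1] for c in corners) / 4.0
    # Where the real remapping places it.
    um, vm = (u0 + u1) / 2.0, (v0 + v1) / 2.0
    true_x, true_y = transform(um, vm)
    error = math.hypot(true_x - flat_x, true_y - flat_y)
    too_small = (u1 - u0) <= min_size or (v1 - v0) <= min_size
    if error <= tol or too_small:
        faces.append((u0, v0, u1, v1))
    else:
        for quad in ((u0, v0, um, vm), (um, v0, u1, vm),
                     (u0, vm, um, v1), (um, vm, u1, v1)):
            subdivide(transform, *quad, tol=tol, min_size=min_size, faces=faces)
    return faces

# A made-up transform that is nearly linear near u = 0 and curves more near u = 1.
def curved(u, v):
    return (u ** 3, v)

faces = subdivide(curved, 0.0, 0.0, 1.0, 1.0)
widest = max(faces, key=lambda f: f[2] - f[0])
narrowest = min(faces, key=lambda f: f[2] - f[0])
print(len(faces), "faces; widest starts at u =", widest[0],
      "narrowest starts at u =", narrowest[0])
```

Testing only the centre point is a cheap heuristic. A real remapper would probably also need to keep neighbouring faces of different sizes from leaving gaps along their shared edges, which this sketch ignores.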