Difference between revisions of "Historical:SoC 2008 Project OpenGL Preview"

James Legg (talk | contribs) m (Added to Community:Project category) |

James Legg (talk | contribs) (→Distortions Comparison: Added a comparison for a rectilinear image at the zenith -> equirectangular) |


Another test performed was mapping a rectilinear image to the zenith of an equirectangular image.

{| border="1"
|[[Image:Blender_zenith_mesh_transform.png]]

'''Vertex mapping Approximate Transformation'''

This is the transformation performed by arranging vertices that cover a uniform grid along the input image. The mesh was 32 faces wide and 16 faces tall, and covered an input image that was 4:3. I removed a row of faces that went right across the image, connecting the left edge to the right edge. This row was there because the left and right edges represent the same line through the input image, and the mapping ignores the discontinuity across that edge. If I use this method, I will have to draw the faces of that row twice, once for each side, using some extra vertices somewhere off the sides of the output, so that the mapping is continuous and the corners are not missing.
|-
|[[Image:Blender_zenith_transform_nona_output.png]]

'''Transformation with Nona'''

This is the mapping the other images are approximating.
|-
|[[Image:Blender_zenith_tex_coord_transform.png]]

'''Texture Coordinate mapping Approximate Transformation'''

This is the transformation performed by finding the points on the input image that are at the points on a grid over the output image. The grid was 64 faces wide and 8 tall. Two of the edges are bad because I couldn't get the negative coordinates (they were clamped to 0); the other two are reasonable because the grid points mapped to the correct places, even though some of them were outside of the input image. If I use this method, I should be able to get the negative coordinates easily and therefore the poles will work without much effort.
|}

[[Category:Community:Project]]

Revision as of 11:26, 13 May 2008

The OpenGL Preview project aims to create a fast previewer for Hugin, reducing the time taken to redraw the preview to real time rates. After that, the new previewer can be made more interactive than the current one. The project is James Legg's Google Summer of Code 2008 project, mentored by Pablo d'Angelo.

Image Transformations

The new previewer will have to approximate the remapping of the images to fit on low resolution meshes. There are two methods for this:

Remapping Vertices

Taking a point in the input image, we can find where the point is transformed to on the final panorama. Therefore, we can take a uniform grid over an input image, and create a mesh that remaps this grid into the output projection.

Disadvantages:

- This method suffers when the input image crosses over the +/-180° boundary in projections such as Equirectangular Projection, as there will be faces connecting one end to the other, and nothing between the edges of those faces and the actual boundary. We must split the mesh so that each part is continuous, which means some faces will have to be defined off the edge of the panorama.

- The poles of an equirectangular image will also cause similar problems. At a pole, the image should cover the whole width of the panorama, but this is only one point in the input image. Vertices in the mesh near the pole define a face that does not cover the whole row.

- The detail in the faces might not match up well with the area of the panorama. The faces are all of different sizes and some mappings may put the details in parts that can't be seen as easily as the lower resolution parts.

Advantages:

- The mesh resembles the output projection well, and covers roughly the same area as the correct projection. The edges of the mesh lie along the edges of the image, so we don't need to worry about what happens outside of it.
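The vertex remapping approach and its +/-180° problem can be sketched as follows. This is a minimal illustration only: the function names are hypothetical, and a simple pinhole model with a yaw offset stands in for the real transformation that Hugin and Nona apply. A face is flagged as crossing the boundary when its corners span more than 180° of longitude.

```python
import math

def rectilinear_to_equirect(u, v, hfov_deg=60.0, yaw_deg=0.0, aspect=1.0):
    """Map normalised input coordinates u, v in [-1, 1] to (longitude, latitude)
    in degrees. Assumed pinhole model, not Hugin's actual transform."""
    half_w = math.tan(math.radians(hfov_deg) / 2.0)
    x = u * half_w
    y = v * half_w / aspect
    z = 1.0
    lon = math.degrees(math.atan2(x, z)) + yaw_deg
    lat = math.degrees(math.atan2(y, math.hypot(x, z)))
    # Wrap longitude into [-180, 180).
    lon = (lon + 180.0) % 360.0 - 180.0
    return lon, lat

def build_vertex_mesh(cols, rows, transform):
    """Uniform grid over the input image, remapped vertex by vertex."""
    return [[transform(-1.0 + 2.0 * i / cols, -1.0 + 2.0 * j / rows)
             for i in range(cols + 1)]
            for j in range(rows + 1)]

def faces_crossing_seam(verts):
    """Return (column, row) of faces whose corners straddle the +/-180° boundary."""
    crossing = []
    for j in range(len(verts) - 1):
        for i in range(len(verts[0]) - 1):
            lons = [verts[j][i][0], verts[j][i + 1][0],
                    verts[j + 1][i][0], verts[j + 1][i + 1][0]]
            if max(lons) - min(lons) > 180.0:
                crossing.append((i, j))
    return crossing

# An image centred on the boundary: some faces stretch across the whole panorama.
mesh = build_vertex_mesh(4, 2, lambda u, v: rectilinear_to_equirect(u, v, yaw_deg=180.0))
seam_faces = faces_crossing_seam(mesh)
```

Faces reported by `faces_crossing_seam` are the ones that would need to be split or drawn twice, once at each side of the panorama.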

Remapping Texture Coordinates

Alternatively, given a point on the final panorama, we can calculate what part of an input image belongs there. Therefore, we can take a uniform grid over the panorama, and map the input image across it.

Disadvantages:

- This mapping breaks down at the borders of the input image. The borders of the input image may cross the output mesh at any position, so we need to draw the image with a transparent border.

- We must also calculate the extent of the images in the result so the mesh only covers the minimal area.

- Some faces of the minimal bounding rectangle still don't contain any of the input image at all; processing them would be a waste of time.

Advantages:

- There are no problems at the poles and edges of the panorama.

- The output is of uniform quality throughout, as the faces always have the same density.
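A rough sketch of the texture coordinate approach, under the same caveats: the names are hypothetical and an assumed pinhole model replaces the real inverse transformation. Each vertex of a uniform grid over the panorama is mapped back to input image coordinates, which are left unclamped so that values outside [0, 1] can fall on a transparent border. Faces that lie entirely to one side of the input image are skipped.

```python
import math

def equirect_to_rectilinear(lon_deg, lat_deg, hfov_deg=90.0, aspect=1.0):
    """Map a panorama point to texture coordinates (s, t), where [0, 1] is
    inside the input image. Returns None for points behind the camera.
    Assumed pinhole model, not Hugin's actual transform."""
    lon, lat = math.radians(lon_deg), math.radians(lat_deg)
    x = math.cos(lat) * math.sin(lon)
    y = math.sin(lat)
    z = math.cos(lat) * math.cos(lon)
    if z <= 0.0:
        return None
    half_w = math.tan(math.radians(hfov_deg) / 2.0)
    u = (x / z) / half_w
    v = (y / z) / half_w * aspect
    return (u + 1.0) / 2.0, (v + 1.0) / 2.0  # deliberately not clamped

def build_texcoord_mesh(lon_range, lat_range, cols, rows, inverse):
    """Uniform grid over part of the panorama, with a texture coordinate per vertex."""
    (lon0, lon1), (lat0, lat1) = lon_range, lat_range
    return [[inverse(lon0 + (lon1 - lon0) * i / cols,
                     lat0 + (lat1 - lat0) * j / rows)
             for i in range(cols + 1)]
            for j in range(rows + 1)]

def face_is_outside(corners):
    """True if all corners lie beyond the same edge of the input image.
    Simplification: faces with a corner behind the camera are also skipped."""
    if any(c is None for c in corners):
        return True
    ss = [c[0] for c in corners]
    ts = [c[1] for c in corners]
    return max(ss) < 0.0 or min(ss) > 1.0 or max(ts) < 0.0 or min(ts) > 1.0

def visible_faces(tex):
    """Return (column, row) of faces that may contain part of the input image."""
    keep = []
    for j in range(len(tex) - 1):
        for i in range(len(tex[0]) - 1):
            corners = [tex[j][i], tex[j][i + 1], tex[j + 1][i], tex[j + 1][i + 1]]
            if not face_is_outside(corners):
                keep.append((i, j))
    return keep

tex = build_texcoord_mesh((-90.0, 90.0), (-30.0, 30.0), 6, 2, equirect_to_rectilinear)
kept = visible_faces(tex)
```

In this example the outermost columns of the grid are dropped because every corner maps beyond the left or right edge of the input image.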

Distortions Comparison

To check that these transformation methods do not distort the image too much, I devised a test. Using Nona to produce a coordinate transformation map from input image coordinates to output image coordinates, and similarly creating a projection that goes the other way, I tried these transformations in Blender.

I projected a cylindrical panorama, 360° wide, and with a pixel resolution 4 times as wide as it is tall, into a fisheye image pointing at the lower pole of the input image, 270° wide, and square.

| Vertex mapping Approximate Transformation

This mesh has 32 columns of faces, and 8 rows, across the input image. Since the image is 4 times as wide as it is tall, this means there are 256 faces which cover an equal amount of the input image. |

Transformation with Nona

This is the remapping that the other images are approximating. |

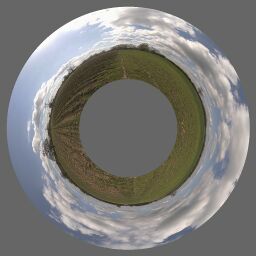

Texture Coordinate mapping Approximate Transformation

This mesh has 16 rows of faces and 16 columns, spread equally over the output panorama, so there are 256 faces which cover an equal amount of the output. Note that at this point I have not attempted to correct the texture coordinates around the boundary of the input image. |

Another test performed was mapping a rectilinear image to the zenith of an equirectangular image.